COVID-19: Capgemini on unlocking the true potential of data

Crises are often catalysts for change, and COVID-19 is no different. The pandemic has vastly increased our reliance on digital technologies and our dependence on data, compressing years of transformation into just a few months.

But this has also presented a data dilemma.

On the one hand, data has underpinned every aspect of our response to the pandemic, helping international bodies and national governments to understand the spread of the virus and the best route to recovery. The search for a vaccine, for example, thrives on the free flow and sharing of data. Enterprises, meanwhile, rely on data to adapt as best they can to the reality of a highly virtualized, yet volatile and unpredictable, business environment. On the other hand, data-informed decisions are being met with skepticism, with citizens and academics often doubting the quality and accuracy of the virus-related data presented to them. The situation is not much better within corporate boundaries, even though data is pivotal to key business processes.

This isn’t anything new. For years, both public health bodies and businesses have been trying to create insights from broad, generic data sets that are often incomplete, inaccurate or lacking the granularity needed to be used effectively. Understandably, this has led to scrutiny of, and questions about, the way data is sourced, used and activated, making people doubt its very reliability.

When used correctly, data is an organization’s most valuable asset. To unlock its true potential, we must look at how organizations are using data and how they can adapt their data practices to an evolving situation. First, though, they must correctly identify and remove the roadblocks that still stand in the way of a truly resilient, data-powered business.

The data paradox

Organizations know that data delivers much-needed intelligence and insight, yet COVID-19 has exposed fundamental weaknesses and, with them, the places where data is failing us.

Restricted access to information, a lack of delegation, and confusion over what data exists and how to extract insight from it, combined with an inability to manage data in real time, have left many businesses struggling with the basics of digital collaboration, business continuity and data management. As a result, any decision based on that data could set them up for failure and leave the way open for competitors to take the lead.

To make end-to-end data processing more efficient, businesses need to identify the blockages that are holding them back and causing performance and latency issues, and understand the root causes behind them. Doing so means developing an agile data strategy that meets current demands: looking at how data is sourced, stored and governed, and how to maintain a strong, highly automated data ecosystem that leaves little to no room for error.

Below, I look at four key strategies that businesses need to employ, first to untangle the data dilemma and then to set themselves up for a successful data future in a (post-)COVID world.

Re-architecting outdated processes

Gaining access to the right data to steer the business through a crisis is typically hindered or blocked by existing IT landscapes and processes. Re-architecting outdated applications into automated solutions not only makes data easier to access and helps management eliminate inefficiencies in data processing, but also improves scalability, agility, performance and visibility. In turn, this builds a greater understanding of how to activate data while improving existing data processes from both a compliance and a performance perspective.

A new focus on data hygiene

Automating decisions depends on having massive amounts of data to train algorithms. Currently, most organizations have poor data hygiene and lack the skills needed to enable this level of independent intelligence, which leads to bottlenecks and inefficiencies. To unravel this data puzzle, organizations need to address how their data is sourced, stored and used, so that they can accelerate their digital transformation with the future in mind and evolve with clear visualization, contextualization and agility.

Reimagining the data ecosystem

Adopting big data initiatives calls for a “fail fast” approach: rapidly exploring new data sets and uses to see whether they add value. This matters because it helps organizations better anticipate future scenarios.

Organizations should embrace agility, encourage experimentation, and treat failure as a lesson rather than something to be feared. Through ongoing testing, aligned to wider business goals, organizations can quickly create data-powered processes that produce informed outcomes and enable more intelligent, more automated decision-making.

The importance of a data culture

Business leaders have a rare opportunity right now to embed data-powered decision-making in business processes, creating a data culture, rethinking their business models and adopting new ways of working. Employees must feel that they can rapidly access the information they need, as and when they need it. As demand for information and data-powered processes rises, having the right culture in place to embrace it will further increase efficiency.

Ultimately, these unprecedented times can be seen as an opportunity for organizations to activate the wealth of data they hold and gain the capability to know more about their competitors, their customers and the world in which they operate. This, however, relies on the accurate and fair management of data. Only by unlocking the full value of data, in the right way, will organizations gain the new insights that help them predict market dynamics, anticipate social trends, identify consumer preferences and manage risks.

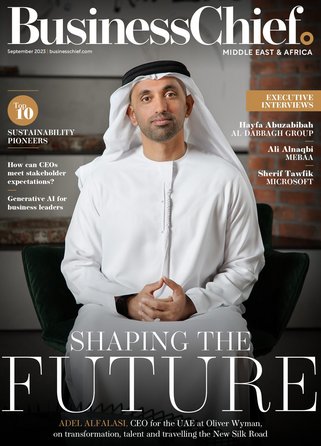

For more information on business topics in Europe, the Middle East and Africa, please take a look at the latest edition of Business Chief EMEA.